Interpreting Web Visitor Statistics

Summary : Website statistics are commonly misunderstood by webmasters, site visitors and, in particular, the media.

Recommendation : A webmaster should be very careful in how they interpret web visitor statistics. Any presentation of data in support of a website's popularity should be honest: 100,000 hits will usually not equal 100,000 people. All data should be clearly described.

The following guide is based upon usage of Webalizer Version 2.01, as provided as part of the standard Phpwebhosting service.

Index

Website Statistics - the Basics

Problems with Hit counters

Making the most of website statistics

Robots - implications for visitor data

Net Links

Summary

Website Statistics - the Basics

A few basic concepts should be understood before interpreting website statistics. Note that definitions may vary depending on the statistics package installed on your host server. The following notes apply to the phpwebhosting web service, but should also be applicable to most web hosts.

-'Hits' : the number of requests made to the server by visitors.

A web page may contain numerous opportunities to register a 'hit'. A page may contain 3 pictures, a header gif image and a few strap lines. Typically, if a visitor requests a page for the first time, many 'hits' may register, even though they are requesting just one page.

The notion of multiple hits per web page is GREATLY overlooked. Just because a site has registered many hits does not mean it has been visited by a great many people.

-'Files' : the number of times the server actually sends data to the visitor's computer.

-'Sites' : the number of unique IP/host addresses visiting your site.

-'Visits' : a visit is a run of page requests from the same visitor. There is a time-out to be aware of: by default, the maximum gap allowed between page requests within a single visit is 30 minutes. If the gap before any page request exceeds 30 minutes, that request starts a NEW visit.

So, if a web visitor requests a page four times at 31-minute intervals (at 0, 31, 62 and 93 minutes), that registers as 4 separate visits.

-'Pages' : the number of page requests. For a page to register as downloaded, not ALL of its graphics have to be sent; what matters is that the general 'frame' of the page is sent.
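To make these definitions a little more concrete, here is a minimal Python sketch that counts hits, pages, sites and visits from a tiny, made-up log of (host, time, URL) records. It is not how Webalizer itself is implemented: the page-extension list, the helper names (`is_page`, `summarise`) and the sample log are all hypothetical, and the 30-minute time-out is taken from the default described above.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from pathlib import PurePosixPath

# ASSUMPTIONS (illustrative only, not Webalizer's real rules):
# - a URL ending in "/" or with one of these extensions counts as a page;
# - a visit ends when a host makes no page request for more than 30 minutes.
PAGE_EXTENSIONS = {".htm", ".html", ".php", ".cgi"}
VISIT_TIMEOUT = timedelta(minutes=30)


def is_page(url: str) -> bool:
    """True if the URL looks like a page rather than an embedded image or file."""
    path = url.split("?", 1)[0]  # ignore any query string
    return path.endswith("/") or PurePosixPath(path).suffix.lower() in PAGE_EXTENSIONS


def summarise(log: list[tuple[str, datetime, str]]) -> dict[str, int]:
    """Count hits, pages, sites and visits from (host, time, url) records."""
    hosts: set[str] = set()
    page_times: dict[str, list[datetime]] = defaultdict(list)
    for host, when, url in log:
        hosts.add(host)
        if is_page(url):
            page_times[host].append(when)

    visits = 0
    for times in page_times.values():
        times.sort()
        visits += 1  # a host's first page request always opens a visit
        for previous, current in zip(times, times[1:]):
            if current - previous > VISIT_TIMEOUT:
                visits += 1  # gap longer than the time-out: a NEW visit

    return {
        "hits": len(log),  # every request is a hit
        "pages": sum(len(times) for times in page_times.values()),
        "sites": len(hosts),  # unique IP/host addresses
        "visits": visits,
    }


t0 = datetime(2003, 3, 2, 12, 0)
log = [("192.0.2.1", t0, "/")]  # first-time view of the home page...
log += [
    ("192.0.2.1", t0, f"/images/{name}")  # ...plus a header gif and 3 pictures
    for name in ("header.gif", "pic1.jpg", "pic2.jpg", "pic3.jpg")
]
log += [("192.0.2.1", t0 + timedelta(minutes=31 * i), f"/page{i}.html") for i in range(1, 4)]
log += [("192.0.2.2", t0 + timedelta(minutes=m), "/index.html") for m in (5, 15)]
print(summarise(log))  # {'hits': 10, 'pages': 6, 'sites': 2, 'visits': 5}
```

The first host generates 5 hits but only 1 page on its first view, and its four page requests 31 minutes apart count as 4 visits, just as in the 'Visits' example.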

Notes

1. Not every hit will result in the server sending the web visitor data:

a. some pages, graphics and files will already be in the visitor's cache (browser cache or local ISP cache);

b. 404 (page not found) errors register as hits, but no file is sent.

2. Repeat visitors : can be roughly discerned by analysing the difference between the hits and files totals. The larger the difference between the two, the more of your visitors are requesting pages that they have ALREADY viewed.

So, a big difference between hits and files means your site has more 'regulars', and regulars are always a good thing (aren't they?).
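The hits/files difference can be sketched by looking at the HTTP status code of each request. This assumes, for illustration only, that a '200 OK' response means the file was sent, while '304 Not Modified' (the visitor's cached copy is still valid) and '404 Not Found' count as hits with no file sent; the function name and the sample data are hypothetical.

```python
# ASSUMPTION (illustrative): only "200 OK" responses actually send the file.
# 304 Not Modified (cached copy still valid) and 404 Not Found are hits
# that send nothing, so they are not counted as files.
SENT_STATUSES = {200}
NOT_MODIFIED = 304


def hits_vs_files(statuses: list[int]) -> dict[str, float]:
    """Compare hits with files for a list of HTTP status codes, one per request."""
    hits = len(statuses)
    files = sum(1 for status in statuses if status in SENT_STATUSES)
    cached = sum(1 for status in statuses if status == NOT_MODIFIED)
    return {
        "hits": hits,
        "files": files,
        "hits_minus_files": hits - files,
        # Share of hits answered from the visitor's own cache: a rough 'regulars' signal.
        "cached_share": cached / hits if hits else 0.0,
    }


newcomer = [200, 200, 200, 200, 200]  # first visit: everything is sent
regular = [200, 304, 304, 304, 304]   # return visit: only the changed page is sent
print(hits_vs_files(newcomer))  # {'hits': 5, 'files': 5, 'hits_minus_files': 0, 'cached_share': 0.0}
print(hits_vs_files(regular))   # {'hits': 5, 'files': 1, 'hits_minus_files': 4, 'cached_share': 0.8}
```

Both visitors produce the same number of hits; only the files total reveals that the second one already had most of the page cached.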

Problems with Hit counters

Okay, so let's take an example. A classic case is where a new website has just sprung up, and the webmaster has stuck a hit counter on the main index page.

Let's say our site is about banning smoking in public. The anti-smoking site soon attracts media interest, and as part of a news story, the reporter says "www.nosmoke.com has already got 50000 hits in just one week, which shows the immense support that exists for banning smoking in public."

Well, on what basis do the reporter, and indeed the webmaster, justify their statement that the site has huge visitor numbers?

The only thing on the web page that indicates supposedly high visitor numbers is the following...

Screen shot taken March 1st 2003, from the site www.dont-pay-ntl.co.uk (site now dead): a typical example of how a webmaster should not be trying to inform us of visitor numbers/site popularity.

As we can see, from 8/2/03 to 1/03/03, this new protest site has a total hit count of 117718. But does this mean 117718 different people have visited?

NO, NO, hell NO!

ALL this figure of 117718 means is this: 117718 'hits' for files from the website. 'Files' are the absolute key to web hits (in most cases).

A file could be a jpg, bmp or any other image file, or an add-in web component such as a messenger status program.

Typically, most web pages will contain at least one or two pictures, and maybe a strap line/header. So, overall, for each page that someone downloads to view on their computer, the web server is 'serving them' a number of files, each of which registers as a 'hit'.

So, each page downloaded will often register as multiple hits.
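A rough back-of-an-envelope conversion shows how far a raw hit count can be from a visitor count. This minimal sketch takes the counter figure of 117718 at face value as file hits and assumes about 5 hits per page (a page plus a header gif and 3 pictures, as in the earlier example) and about 2 pages per visit (the Feb figure for Calrissian.com discussed later). Both ratios are assumptions, and the function names are hypothetical.

```python
def estimate_page_views(hits: int, hits_per_page: float) -> float:
    """Each page view generates several hits (the page plus its images)."""
    return hits / hits_per_page


def estimate_visits(hits: int, hits_per_page: float, pages_per_visit: float) -> float:
    """Visits are fewer still, because each visit usually covers several pages."""
    return estimate_page_views(hits, hits_per_page) / pages_per_visit


counter = 117718  # the hit counter figure from the screenshot
# ASSUMED ratios: 5 hits per page, about 2 pages per visit.
print(round(estimate_page_views(counter, 5)))  # 23544
print(round(estimate_visits(counter, 5, 2)))   # 11772
```

Even the resulting visit estimate is not a count of people, since one person can make many visits.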

Making the most of website statistics

In this section, we shall briefly look at what CAN be derived from website statistics.

Might as well use my own web stats; what better example could I use? ;)

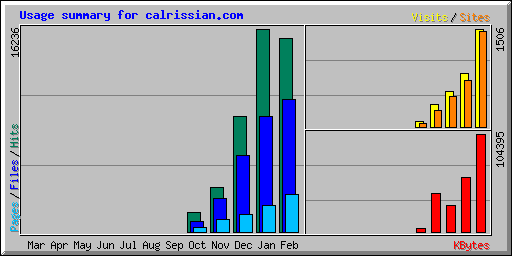

Okay, firstly, with reference to Figure 1.0, we have just 5 months of stats.

Well, what, if anything, can be gained from this?

1. The 'general trend' is upwards in terms of pages, files and hits.

2. The hits/files ratio has changed. There were more regulars as a % of total hits/files in Jan than in Dec.

3. Although the number of visitors was sharply up in Feb, the monthly total of 'hits' was actually lower (15472 in Feb against 16236 in Jan)*.

*The reason for this was a restructured website (done in late January) with fewer gif hyperlinks, each of which had registered as a hit. The overall number of small gifs/jpgs is sharply down, accounting for this anomaly.

4. Total data downloaded shows a broad increase over the period.

5. Visit numbers are broadly in sync with total site numbers.

Figure 1.0 : Calrissian.com web data Oct'02-Feb'03

Figure 1.1 : Calrissian.com data table Oct'02-Feb'03

Summary by Month

| Month | Daily Avg Hits | Daily Avg Files | Daily Avg Pages | Daily Avg Visits | Monthly Sites | Monthly KBytes | Monthly Visits | Monthly Pages | Monthly Files | Monthly Hits |
|---|---|---|---|---|---|---|---|---|---|---|
| Feb 2003 | 552 | 378 | 106 | 53 | 1464 | 104395 | 1506 | 2981 | 10602 | 15472 |
| Jan 2003 | 523 | 297 | 68 | 26 | 710 | 57595 | 817 | 2131 | 9218 | 16236 |
| Dec 2002 | 298 | 198 | 45 | 17 | 467 | 28272 | 548 | 1409 | 6153 | 9261 |
| Nov 2002 | 119 | 90 | 32 | 11 | 249 | 40966 | 346 | 973 | 2703 | 3580 |
| Oct 2002 | 85 | 44 | 19 | 4 | 49 | 3432 | 81 | 351 | 808 | 1533 |
| Totals | | | | | | 234660 | 3298 | 7845 | 29484 | 46082 |

Well, Figure 1.1 gives a simple summary of typical results from a starter website that is less than a year old. Numbers are small across the board, although a discernible trend can be seen.

However, the mean daily page-to-visit ratio for Feb. is only 2 pages per visit (106 pages / 53 visits). Clearly, the majority of visitors (including robots) are not trawling the site across many pages.

Key points

-A trend CAN be inferred from the data.

-The difference between hits and files indicates how many 'regulars' the site has. Robots can also be regular visitors, which further complicates matters.

-The most important numbers are arguably the average visit and page totals. The page-to-visit ratio is also important to calculate.
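The page-to-visit and hits/files ratios recommended above can be calculated directly from the monthly totals in Figure 1.1. Here is a minimal Python sketch; the helper names are hypothetical, and the figures are copied from the table.

```python
# Monthly totals copied from Figure 1.1: (month, visits, pages, files, hits)
MONTHLY_TOTALS = [
    ("Oct 2002", 81, 351, 808, 1533),
    ("Nov 2002", 346, 973, 2703, 3580),
    ("Dec 2002", 548, 1409, 6153, 9261),
    ("Jan 2003", 817, 2131, 9218, 16236),
    ("Feb 2003", 1506, 2981, 10602, 15472),
]


def page_visit_ratio(pages: int, visits: int) -> float:
    """Average number of pages viewed per visit."""
    return pages / visits


def hits_files_ratio(hits: int, files: int) -> float:
    """Hits per file actually sent; higher values suggest more cached (repeat) requests."""
    return hits / files


for month, visits, pages, files, hits in MONTHLY_TOTALS:
    print(
        f"{month}: {page_visit_ratio(pages, visits):.2f} pages/visit, "
        f"{hits_files_ratio(hits, files):.2f} hits/file"
    )
```

On these totals Feb works out at about 1.98 pages per visit, in line with the daily averages, and the hits/file ratio for Jan (about 1.76) is higher than for Dec (about 1.51), consistent with point 2 above.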

Robots - implications for visitor data

Search engines, which use automated 'robots' to trawl the net's millions of websites and index billions of pages, can make web visitor statistics much harder to analyse.

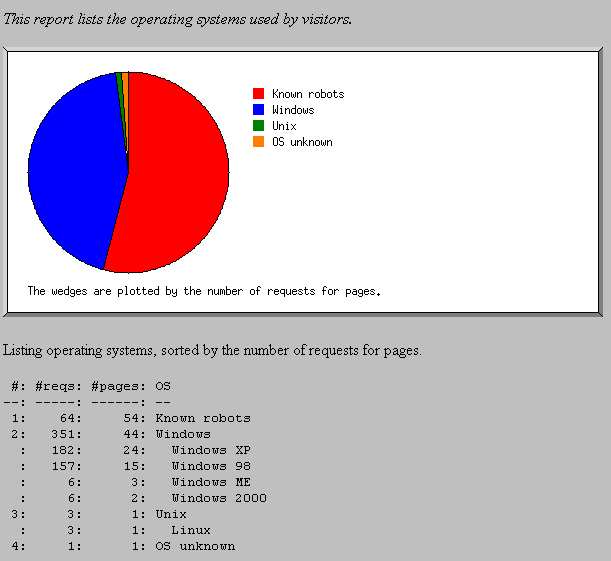

The screenshot above shows visitor data for Calrissian.com on Mar 2nd 2003.

Of the 111 pages requested on that day, 54 were requested by known robots!

Calrissian.com, being a new site, has VERY small visitor numbers, and there are times when up to 75% of all pages requested are not even requested by real people! Clearly, young and small websites will look more bizarre in this way than the large global net sites.
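One common way to estimate the robot share is to match each page request's user-agent string against a list of known robots. The sketch below assumes such a list (the markers shown are illustrative, not complete) and uses hypothetical helper names; the sample day simply mirrors the 54-of-111 figure above.

```python
# ASSUMPTION: illustrative user-agent fragments treated as known robots.
# A real list would be far longer and would need regular updating.
ROBOT_MARKERS = ("googlebot", "slurp", "msnbot", "ia_archiver", "crawler", "spider")


def is_robot(user_agent: str) -> bool:
    """Case-insensitive substring match against the known-robot markers."""
    agent = user_agent.lower()
    return any(marker in agent for marker in ROBOT_MARKERS)


def robot_share(page_user_agents: list[str]) -> float:
    """Fraction of page requests made by known robots."""
    if not page_user_agents:
        return 0.0
    robots = sum(1 for agent in page_user_agents if is_robot(agent))
    return robots / len(page_user_agents)


# Mirrors Mar 2nd 2003: 111 page requests, 54 of them from known robots.
day = ["Googlebot/2.1 (+http://www.googlebot.com/bot.html)"] * 54
day += ["Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1)"] * 57
print(f"{robot_share(day):.1%}")  # 48.6%
```

Any robot missing from the list is silently counted as a person, so a figure like this is best read as a lower bound on robot traffic.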

Typically, I have found that for a website with fewer than 100 visitors a day, around 20% of visitors are likely to be robots on an average day. In my experience, the range can be as low as 5% or as high as around 90%. Naturally, the number of robot visitors will depend upon how well search engines have managed to discover that the website exists. It may take a number of months for most mainstream search engines to even catalogue the index/home page of a personal/small-scale website.

Summary : In the early days of a website, robots may well make up a considerable % of all web visitors.

Net links

Webalizer Quick help guide : For all users of this web stats program, this link will provide most of the info any webmaster will require.

Performance Indicators for websites : An excellent summary article on all key issues, by B. Kelly, Uni. Bath, UK

Summary

A good webmaster will want to know who is visiting their site, what pages they visit, etc. However, just reviewing a few raw totals such as hits and total visits is simply not adequate for gaining even a rough understanding. The important thing is that the more data, the better, when forming any level of analysis.

Web robot visits are particularly important to consider, as they can easily distort visitor data. Such robots are more of a problem for small-scale websites, where the proportion of robots to real people can be VERY high.

Key points

-Hit counters on web pages are a notoriously unreliable means of judging the success/popularity of a website.

-Suggestions : Hit counters should rarely, if ever, be used on web pages. If web visitor data is presented on a website, it should at least be presented in a fair manner, with some background/history info.

-Web robots must be considered when forming any appreciation of web visitor data.

Last updated : 08/10/04